A/B testing is a powerful tool for optimizing products and improving the user experience.

By comparing the performance of two different product versions, you can determine which version is more effective at achieving your business goals.

However, A/B testing requires a systematic and careful approach to get reliable and meaningful results.

This step-by-step guide will walk you through the process of conducting an A/B test, from defining your goals and objectives to analyzing and interpreting the results.

By following these steps, you can use A/B testing to make data-driven decisions and continuously improve your product’s performance and user experience.

What is A/B Testing?

A/B testing, also known as split testing, is an experimentation method that compares different versions of a webpage or marketing campaign to see which one is more effective at achieving specific business goals.

It is called A/B testing because it typically compares two versions of a webpage against each other: A, usually the original version, and B, a challenger version.

A/B testing has different categories, which include the following:

1. Element level testing: This is considered the easiest category of A/B tests because you only test individual website elements like images, CTAs, or headlines. Each test should start from a hypothesis about why an element needs to change.

2. Page-level testing: As the name suggests, this testing involves moving elements around a page or removing and introducing new elements.

3. Visitor flow testing: This category of split test focuses on optimizing how visitors navigate your site. Visitor flow tests usually have a direct impact on conversion rate.

4. Messaging: Yes, you have to A/B test the messaging on different sections of your site to ensure consistency in tone and language. Messaging A/B tests take a lot of time since you have to check every page and element for messaging consistency.

5. Element emphasis: Sometimes, in an attempt to reinforce an element, such as a button, headline, or CTA, we may repeat such elements too many times. This category of A/B testing focuses on answering questions like “How many times should an element be displayed on a page or throughout the website to get visitors’ attention?”

Benefits of A/B testing

The benefits of A/B testing are well documented. Here are ten advantages of running A/B tests:

- Improved effectiveness: A/B testing allows you to test different product variations and compare their performance to determine which version is most effective at achieving your business goals.

- Enhanced user experience: A/B testing allows you to test different design or feature changes and see how they impact user behavior, so you can optimize the product to better meet the needs and preferences of your users.

- Data-driven decision-making: A/B testing allows you to make decisions based on objective data rather than subjective opinions or assumptions, which can help you more effectively optimize the product.

- Improved conversions: A/B testing can help you identify changes that increase conversions, such as increasing the effectiveness of calls to action or improving the product’s overall usability.

- Increased customer retention: A/B testing can help you identify changes that improve customer retention, such as reducing friction in the user experience or making it easier for customers to complete tasks.

- Greater efficiency: A/B testing can help you identify changes that make the product more efficient, such as streamlining processes or reducing the number of steps required to complete a task.

- Enhanced engagement: A/B testing can help you identify changes that increase engagement, such as adding social media integration or improving the product’s overall design.

- Improved ROI: A/B testing can help you achieve a better return on investment by identifying changes that will enhance the product’s effectiveness and efficiency.

- Enhanced competitiveness: A/B testing allows you to continuously optimize and improve your product, which can help you stay competitive in a crowded market.

- Greater customer satisfaction: By using A/B testing to improve your product’s effectiveness and user experience, you can increase customer satisfaction and build loyalty.

When to Do A/B Testing

When should you use A/B testing? Well, there is never a wrong time for A/B testing. You should constantly be testing to identify opportunities for growth and improvement.

There are several situations in which A/B testing may be useful:

- When you want to test a new feature or design change: A/B testing allows you to compare the performance of your product/website with and without the new feature or design change, so you can see whether it has a positive or negative impact on user behavior.

- When you want to optimize a website for a specific goal: A/B testing allows you to test different variations of a website page to see which one performs best in achieving that goal, such as increasing conversions or improving user retention.

- When you want to make data-driven decisions: A/B testing allows you to test different product variations and compare their performance based on objective data rather than relying on subjective opinions or assumptions.

It’s important to note that A/B testing should be part of a larger optimization process and is not a one-time activity.

It is often used in conjunction with other conversion rate optimization techniques.

What can you A/B Test?

When it comes to A/B testing, you have a wide range of options. Almost any element of your website or digital marketing campaigns can be tested to see what yields better results. Here are some key areas to consider:

- Headlines and Copy: The words you choose can significantly impact user engagement. Test different headlines, subheadings, and body copy to see which resonates better with your audience.

- Call-to-Action (CTA): Your CTA drives conversions. Experiment with different phrases, button colors, placements, and sizes to determine what prompts more users to take action.

- Images and Videos: Visual content plays a big role in user experience. Test various images or video thumbnails to discover what captures attention and drives interaction.

- Forms: Forms often play a key role in the conversion process. Try different form lengths, field labels, or even the order of fields to reduce friction and increase submissions.

- Navigation and Layout: How users navigate your site affects their overall experience. To make navigation smoother, test different menu structures, layout variations, and the number of steps in a process.

- Product Descriptions: The way you present your products on e-commerce sites can influence buying decisions. Test different descriptions, bullet points versus paragraphs, and the inclusion of user reviews.

- Pricing: Pricing strategies can greatly impact conversion rates. While this should be approached carefully, testing price points, discount offers, or bundling options can reveal what customers are willing to pay.

- Landing Pages: The design and content of your landing pages are essential in capturing leads. Test different headlines, copy, images, and CTA buttons to optimize conversion rates.

- Emails: Email marketing offers another area ripe for testing. Experiment with subject lines, email body content, send times, and personalized elements to increase open and click-through rates.

- Checkout Process: For e-commerce sites, the checkout process is key to securing sales. Test different layouts, the number of steps, payment options, and even trust badges to minimize cart abandonment.

Setting Up an A/B Test Using FigPii

We can’t talk about A/B tests without talking about A/B testing tools.

There are hundreds of A/B testing tools in the market, but we recommend FigPii.

You also get access to other conversion optimization tools, such as heatmaps, session recordings, polls, etc., that can help improve your site’s existing conversion rate.

FigPii also offers a 14-day free trial with full access to all the features available on the platform.

With that said, let’s set up an A/B Test in FigPii:

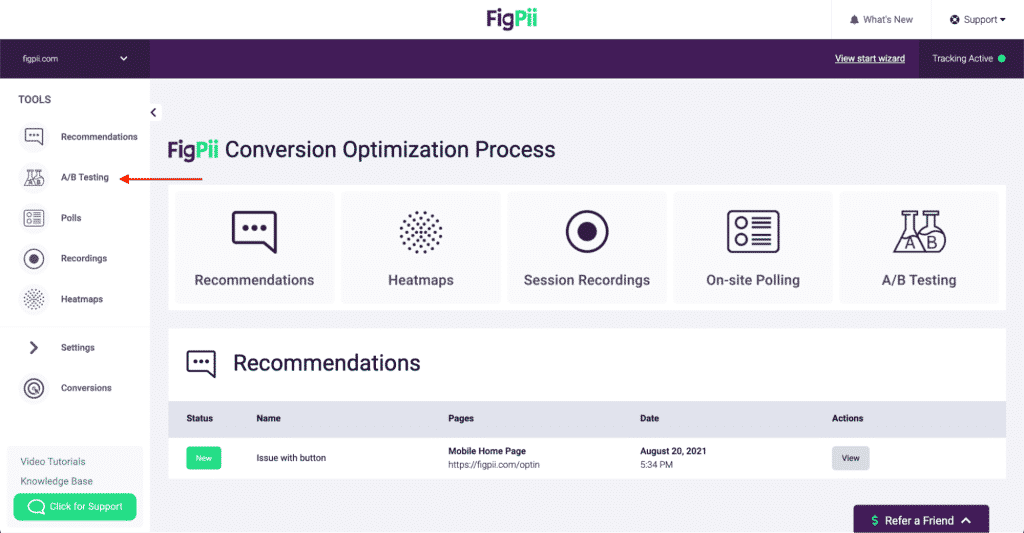

- If you do not have an account, visit https://www.figpii.com/register/basic-info to register.

- If you have an account, visit www.figpii.com/dashboard and select A/B testing from the menu on the left-hand side of the page.

- Choose a name for your experiment and decide on its type: an A/B test or a split URL test.

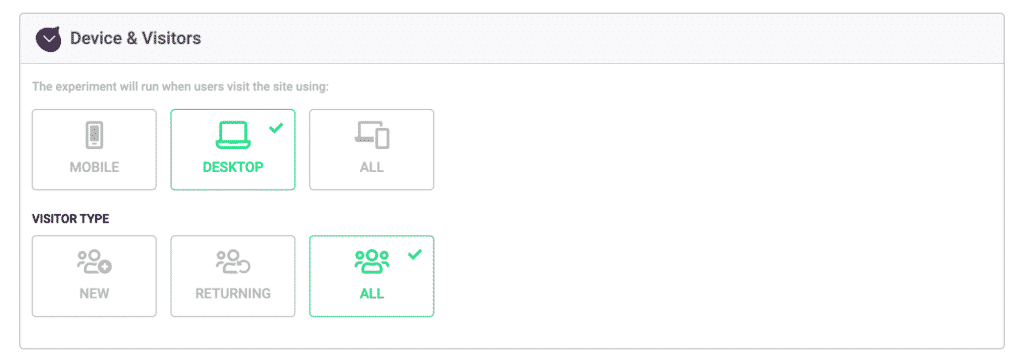

- If you want to run your test on multiple devices, FigPii lets you test on both mobile and desktop.

- You also have the option of choosing the category of audience you want to focus on.

- Running A/B tests with FigPii requires adding code to your website. FigPii provides instructions for doing this.

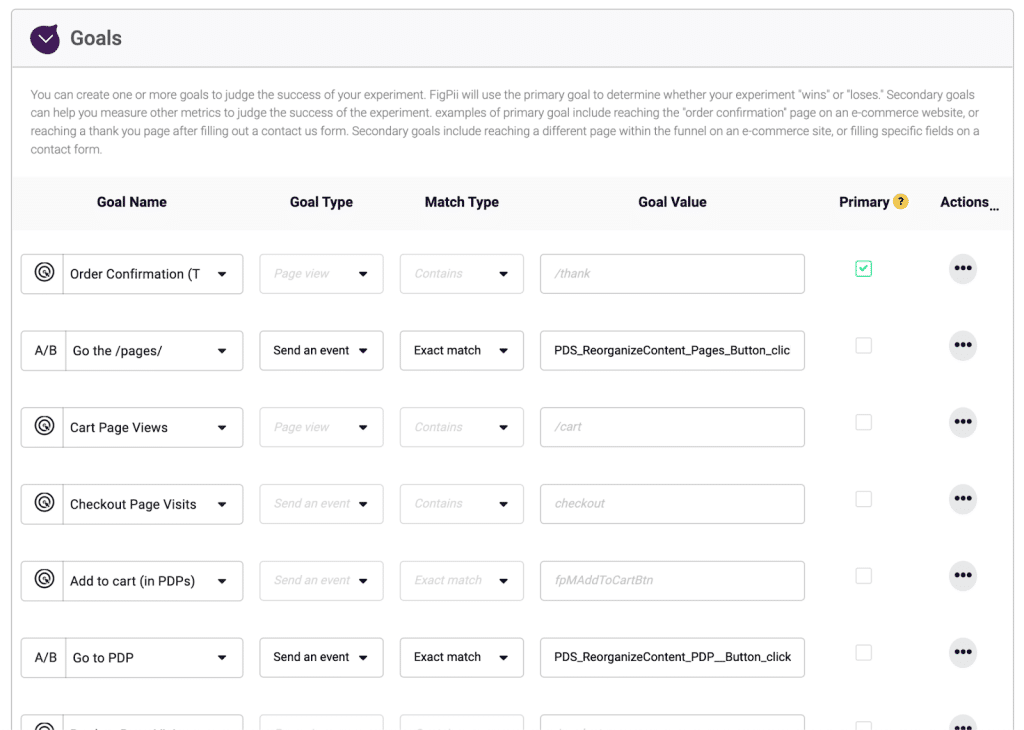

Identifying the goal of the test

When conducting an A/B test, it is important first to identify the goal. This will help you determine what you are trying to measure and what success looks like for the test.

Common goals for A/B tests include increasing conversions, improving user engagement, and reducing bounce rates.

By clearly defining the goal of your A/B test, you can accurately measure and evaluate its success.

When defining your goals, remember that you can have more than one.

However, one of them must be the primary goal, and the rest are secondary goals.

Your primary goal is the main metric you want to achieve by running the test, and your secondary goals focus on providing additional insights into user behavior.

Examples of primary goals include conversion rate, bounce rate, cart abandonment rate, and click-through rate. Examples of secondary goals include category/subcategory page views, add-to-cart actions, checkout page views, etc.

FigPii will use the primary goal to determine whether your experiment “wins” or “loses.” Secondary goals can help you measure other metrics to judge the experiment’s success.
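To make the primary/secondary split concrete, here is a minimal Python sketch. The `ExperimentGoals` class, the metric names, and the `higher_is_better` flag are hypothetical illustrations, not FigPii's API. The outcome is decided by the primary metric alone, while secondary metrics are only reported for context. The sketch compares raw values and deliberately ignores statistical significance, which a real tool would also check before declaring a winner.

```python
from dataclasses import dataclass, field


@dataclass
class ExperimentGoals:
    """Hypothetical goal config (not a FigPii data structure): one primary metric, optional secondary metrics."""
    primary: str
    secondary: list[str] = field(default_factory=list)


def decide(goals: ExperimentGoals, control: dict[str, float],
           variation: dict[str, float], higher_is_better: bool = True) -> str:
    """Return 'wins', 'loses', or 'ties' using the primary metric only.

    Note: this compares raw values and ignores statistical significance.
    """
    c, v = control[goals.primary], variation[goals.primary]
    if v == c:
        return "ties"
    improved = v > c if higher_is_better else v < c
    return "wins" if improved else "loses"


def secondary_report(goals: ExperimentGoals, control: dict[str, float],
                     variation: dict[str, float]) -> dict[str, float]:
    """Variation-minus-control difference for each secondary metric (context only)."""
    return {m: variation[m] - control[m] for m in goals.secondary}


# Example with made-up numbers: bounce rate is the primary goal, so lower is better.
goals = ExperimentGoals(primary="bounce_rate", secondary=["add_to_cart_rate"])
control = {"bounce_rate": 0.52, "add_to_cart_rate": 0.08}
variation = {"bounce_rate": 0.47, "add_to_cart_rate": 0.09}
print(decide(goals, control, variation, higher_is_better=False))  # wins
print(secondary_report(goals, control, variation))
```

For a bounce-rate or cart-abandonment goal, pass `higher_is_better=False`, since a lower value is the improvement you want.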


Choosing the elements to test

Not every element is worth testing, because not every element has a meaningful impact on your conversions or user experience.

Once you have defined the goal of your A/B test, the next step is to choose the elements of your product that you will be testing.

This could include design elements, such as the layout or colors, or functional elements, such as an e-commerce site’s checkout process or an app’s navigation menu.

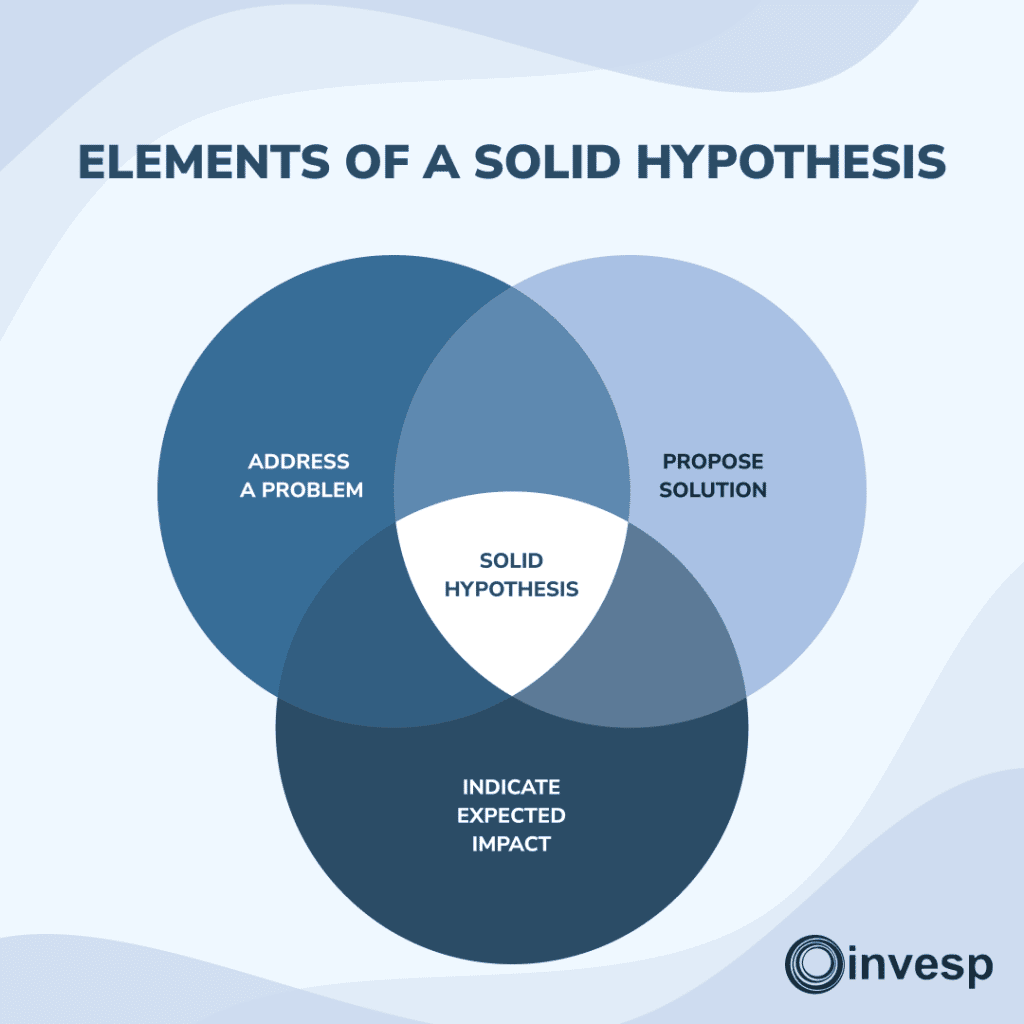

Usually, every A/B test is backed by a solid hypothesis. A hypothesis is an educated guess about why a web page produces particular results, together with a proposed change to improve those results.

Typically, your hypothesis should address a problem, propose a solution, and indicate the expected impact of implementing the solution.
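One way to keep hypotheses complete is to record them in a fixed structure. The sketch below is a hypothetical Python template (the `Hypothesis` class, its field names, and the example wording are illustrative assumptions) that refuses a hypothesis missing any of the three parts and renders it as a single sentence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """Hypothetical template: problem, proposed solution, expected impact."""
    problem: str
    solution: str
    expected_impact: str

    def __post_init__(self) -> None:
        # Refuse to create a hypothesis with any of the three parts left blank.
        for part in ("problem", "solution", "expected_impact"):
            if not getattr(self, part).strip():
                raise ValueError(f"hypothesis is missing its {part}")

    def statement(self) -> str:
        """Render the three parts as one 'Because..., if..., then...' sentence."""
        return (f"Because {self.problem}, if we {self.solution}, "
                f"then we expect {self.expected_impact}.")


h = Hypothesis(
    problem="visitors rarely scroll down to the sign-up button",
    solution="move the sign-up button above the fold",
    expected_impact="a higher sign-up conversion rate",
)
print(h.statement())
```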

When choosing the elements to test, it is important to focus on those most likely to impact the goal of your A/B test.

For example, suppose your goal is to increase conversions through your landing page. In that case, you should focus on testing elements directly related to that web page’s conversion process.

Choosing elements to test becomes less challenging if you have a strong and valid hypothesis.


Creating the variation groups

After choosing the elements to test in your A/B test, the next step is creating the variation(s) that will be used in the test.

This typically involves creating two or more versions of your website: the control, which represents how the website currently looks and functions, and one or more variations that are tested against the control.

When creating the variations, it is important to make only one change at a time to accurately determine its impact on the goal of your A/B test.

For example, if you are testing a website’s layout, you would create different versions of the website, one with the current layout and others with a different layout, but all other elements of the website would remain the same.
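The one-change rule is easy to check mechanically if each version is described as a set of element settings. In this minimal sketch, the element names (`layout`, `headline`, `cta_color`) and the dictionary representation are assumptions for illustration; the function verifies that every variation differs from the control in exactly one element.

```python
_MISSING = object()  # sentinel so a missing element differs from any real value


def changed_elements(control: dict, variation: dict) -> set:
    """Return the element names whose settings differ between control and variation."""
    names = set(control) | set(variation)
    return {n for n in names if control.get(n, _MISSING) != variation.get(n, _MISSING)}


def check_single_change(control: dict, variations: dict[str, dict]) -> dict[str, str]:
    """Map each variation to its single changed element; raise if it changes zero or several."""
    result = {}
    for name, variation in variations.items():
        diff = changed_elements(control, variation)
        if len(diff) != 1:
            raise ValueError(
                f"variation {name!r} changes {sorted(diff)}; expected exactly one change")
        result[name] = diff.pop()
    return result


# Hypothetical element settings for the current page (the control).
control = {"layout": "two-column", "headline": "Save time today", "cta_color": "green"}
variations = {"B": {**control, "layout": "single-column"}}
print(check_single_change(control, variations))  # {'B': 'layout'}
```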


Setting up the tracking and reporting

Once you have created the variations for your A/B test, the next step is to set up tracking and reporting to measure the performance of your experiment.

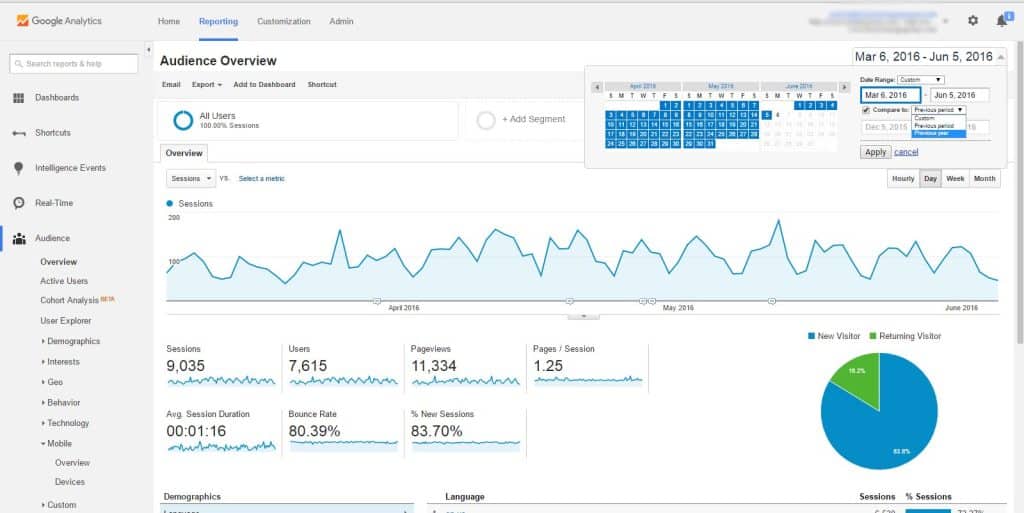

This typically involves using an analytics tool, such as Google Analytics, to track key metrics related to the goal of your A/B test.

For example, if your goal is to increase conversions, you would set up tracking to monitor the number of conversions for each website variation.

This will allow you to compare the variations’ performance and determine which is more effective at achieving your goal.
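As a rough illustration of what such tracking computes, the sketch below counts unique visitors and unique converting visitors per variation from a raw event log. The `(user_id, variation, event_name)` tuple format and the `view`/`purchase` event names are hypothetical; in practice these numbers would come from your analytics or A/B testing tool.

```python
from collections import defaultdict


def conversion_summary(events, exposure_event="view", conversion_event="purchase"):
    """Unique visitors, unique converters, and conversion rate per variation.

    events: iterable of (user_id, variation, event_name) tuples (hypothetical format).
    """
    visitors = defaultdict(set)
    converters = defaultdict(set)
    for user_id, variation, event in events:
        if event == exposure_event:
            visitors[variation].add(user_id)
        elif event == conversion_event:
            converters[variation].add(user_id)

    summary = {}
    for variation, users in visitors.items():
        # Count each converting visitor once, and only if they actually saw this variation.
        converted = converters[variation] & users
        summary[variation] = {
            "visitors": len(users),
            "conversions": len(converted),
            "rate": len(converted) / len(users),
        }
    return summary


events = [
    ("u1", "A", "view"), ("u2", "A", "view"), ("u2", "A", "purchase"),
    ("u3", "B", "view"), ("u3", "B", "purchase"), ("u3", "B", "purchase"),
    ("u4", "B", "view"), ("u4", "B", "purchase"),
]
for variation, stats in sorted(conversion_summary(events).items()):
    print(variation, stats)
```

Counting unique users rather than raw purchase events keeps a single visitor who buys twice from inflating a variation's conversion rate.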

Although most A/B testing tools also have features for tracking metrics, it’s wise to integrate an external tracking tool like Google Analytics.

Integrating an A/B testing tool with Google Analytics can provide several benefits:

- Improved data accuracy: By integrating the two tools, you can more accurately track and measure the impact of your A/B tests on key metrics such as conversions, traffic, and user engagement.

- Enhanced data visualization: A/B testing tools often provide their own dashboards and reports. However, integrating with Google Analytics lets you view your test data alongside other important business metrics in a single platform.

- Streamlined data analysis: Integrating your A/B testing tool with Google Analytics allows you to use Google Analytics’ powerful analytics and reporting features to analyze and interpret your test data.

- Increased flexibility: Integration with Google Analytics allows you to use the full range of customization and segmentation options available in the platform to analyze your test data.


Running the A/B Test

Now that you have defined your goals, identified the elements you want to test, created your variations, and set up your tracking and reporting tools, it’s time to get to the main business: running the A/B test.

But first, it is important to perform quality assurance (QA) on your test, for several reasons:

- To ensure that the test is set up correctly: Performing QA before launching an A/B test can help you identify and fix any issues with the test setup, such as incorrect targeting or tracking of test metrics.

- To avoid disruption to the user experience: If there are issues with the test setup or implementation, it can result in a poor user experience or even broken functionality. Performing QA before launching the test can help you avoid these issues and ensure that the test does not negatively impact the user experience.

- To minimize the risk of data loss or corruption: If there are issues with the test setup or implementation, it can result in incorrect or missing data, which can compromise the reliability of the test results.
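One common QA check for incorrect targeting is a sample ratio mismatch (SRM) test: if you configured a 50/50 split but the visitor counts drift far from 50/50, something in the setup is likely broken. The sketch below uses a chi-square goodness-of-fit test with one degree of freedom. The function name and visitor counts are hypothetical, and the strict `alpha=0.001` threshold is an assumption rather than a universal rule.

```python
import math


def srm_check(visitors_a: int, visitors_b: int, expected_share_a: float = 0.5,
              alpha: float = 0.001) -> tuple[float, float, bool]:
    """Chi-square goodness-of-fit test (1 degree of freedom) for a two-way traffic split.

    Returns (chi_square, p_value, mismatch_detected).
    """
    total = visitors_a + visitors_b
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi_square = ((visitors_a - expected_a) ** 2 / expected_a
                  + (visitors_b - expected_b) ** 2 / expected_b)
    # For 1 degree of freedom, P(X > x) equals erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi_square / 2))
    return chi_square, p_value, p_value < alpha


print(srm_check(5000, 4950))  # small drift: no mismatch flagged
print(srm_check(5000, 4600))  # large drift: mismatch flagged
```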


Launching the test

This is the stage where your chosen A/B Testing tool gets to work. These tools are designed to make it easy to set up and run A/B tests, and they typically provide a range of features and capabilities to help you get the most out of your A/B test.

With your A/B testing tool, you make the variations of your website available to users and direct a portion of your traffic to each variation. How many visitors each variation needs depends on your test’s goal, in particular the baseline value of your primary metric and the smallest improvement you want to be able to detect.
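A/B testing tools handle traffic allocation for you, but the underlying idea can be sketched in a few lines. This hypothetical `assign_variation` function hashes the user ID together with an experiment name so that each visitor always sees the same variation on repeat visits, and traffic is split according to the given weights. It illustrates the general hash-bucketing technique; it is not how FigPii or any specific tool is known to implement allocation.

```python
import hashlib
from collections import Counter


def assign_variation(user_id: str, experiment: str, weights: dict[str, float]) -> str:
    """Deterministically assign a user to a variation in proportion to `weights`.

    Hashing experiment name plus user ID means the same visitor always gets the
    same variation, while different experiments split traffic independently.
    """
    if not weights or sum(weights.values()) <= 0:
        raise ValueError("weights must contain at least one positive value")
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Keep the top 53 bits so the resulting float in [0, 1) is exact.
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    total = sum(weights.values())
    cumulative = 0.0
    for name, weight in weights.items():
        cumulative += weight / total
        if bucket < cumulative:
            return name
    return list(weights)[-1]  # floating-point rounding guard for the last bucket


# Hypothetical 50/50 split over 10,000 simulated visitors.
counts = Counter(assign_variation(f"user-{i}", "homepage-hero", {"A": 0.5, "B": 0.5})
                 for i in range(10_000))
print(counts)  # roughly 5,000 each
```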

Once you’ve launched your test, you can use the A/B testing tool to monitor the variations’ performance in real time.

At this stage, there’s an important question: “How long should an A/B test run?”

The duration of an A/B test depends on factors such as sample size, statistical significance, and site traffic. However, experts recommend running tests for at least two weeks to get accurate results that are representative of your audience.

Running the test for at least two weeks also accounts for differences in how customers interact with your website on different days of the week.
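To see how traffic and sample size translate into a test duration, here is a sketch using the standard normal-approximation sample-size formula for comparing two conversion rates. The baseline rate, the minimum detectable effect (`mde_relative`), the 5% significance level, 80% power, and the daily traffic figure are all assumptions you would replace with your own values. The duration is rounded up to whole weeks and never falls below the two-week minimum recommended above.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline: float, mde_relative: float,
                              alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed per variation to detect a relative lift in a conversion rate.

    Uses the normal-approximation formula for a two-sided test comparing two proportions.
    """
    p1 = baseline
    p2 = baseline * (1 + mde_relative)
    if not (0 < p1 < 1 and 0 < p2 < 1):
        raise ValueError("baseline and baseline * (1 + mde_relative) must be in (0, 1)")
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def duration_in_days(n_per_variation: int, num_variations: int,
                     daily_visitors: int, min_days: int = 14) -> int:
    """Days needed to reach the sample size, rounded up to whole weeks, never below min_days."""
    days = math.ceil(n_per_variation * num_variations / daily_visitors)
    return math.ceil(max(days, min_days) / 7) * 7


n = sample_size_per_variation(baseline=0.05, mde_relative=0.20)
print(n)  # about 8,160 visitors per variation
print(duration_in_days(n, num_variations=2, daily_visitors=1_000))  # 21
```

With a 5% baseline conversion rate and a 20% relative minimum detectable effect, each variation needs roughly 8,160 visitors, so a site with 1,000 daily visitors split across two variations would need about three weeks. Halving the detectable effect roughly quadruples the required sample, which is why low-traffic sites need longer tests.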


Collecting and analyzing data

After launching your test and monitoring the progress until the test is over, the next step is gathering and analyzing data from the test.

Most A/B testing tools include a reporting feature that analyzes the results and shows you how each variation performed on the defined metrics.

Analyzing the results can also give you valuable insights into how to improve your product or service and drive better results.
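Most tools report the lift of each variation over the control. The sketch below computes the absolute and relative lift in conversion rate plus a normal-approximation (Wald) confidence interval for the difference. The function name and input counts are hypothetical, and individual tools may use other interval methods.

```python
import math
from statistics import NormalDist


def lift_summary(conv_a: int, n_a: int, conv_b: int, n_b: int,
                 confidence: float = 0.95) -> dict:
    """Absolute and relative lift of B over A, plus a Wald confidence interval for B - A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a
    # Unpooled standard error of the difference between two proportions.
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return {
        "rate_a": p_a,
        "rate_b": p_b,
        "absolute_lift": diff,
        "relative_lift": diff / p_a if p_a > 0 else None,
        "ci_low": diff - z * se,
        "ci_high": diff + z * se,
    }


# Hypothetical counts: 500/10,000 conversions for A and 580/10,000 for B.
summary = lift_summary(500, 10_000, 580, 10_000)
print(f"relative lift: {summary['relative_lift']:.1%}")  # relative lift: 16.0%
print(f"95% CI for the difference: [{summary['ci_low']:.4f}, {summary['ci_high']:.4f}]")
```

If the interval for the difference excludes zero, the data are consistent with a real improvement; a wide interval signals that more traffic is needed before drawing conclusions.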

FigPii can also provide recommendations or best practices for incorporating the findings from your A/B test into your product development process.


Interpreting the results

This is where you can get it wrong and misinterpret your results, especially if your tests’ goals and success metrics were not clearly defined beforehand.

When interpreting the results of an A/B test, it is important to consider these factors:

- Test Duration: The running time of your tests should be considered when analyzing the results. In most cases, the lower the site traffic, the longer your tests should run.

- Number of conversions per variation

- Result segments based on traffic, visitor type, and device

- Internal and external factors

- Micro-conversion data

- Statistical Significance Level: A high level of statistical significance indicates that the observed differences between the two user groups are unlikely to have occurred by chance.

- Sample Size: A large sample size helps ensure that the results are representative of the general audience.
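To make statistical significance concrete, here is a minimal two-sided two-proportion z-test in Python, one common way to compare conversion rates between two variations. The function name and example counts are hypothetical, and commercial tools may use different methods (for example, Bayesian or sequential statistics), so treat this as a sketch rather than a replica of any tool's calculations.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference in conversion rates. Returns (z, p_value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Under the null hypothesis both groups share one conversion rate, so pool them.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test: pooled conversion rate is 0 or 1")
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


z, p = two_proportion_z_test(500, 10_000, 580, 10_000)
print(f"z = {z:.2f}, p = {p:.3f}")  # z = 2.50, p = 0.012
```

With 500 conversions out of 10,000 visitors for A and 580 out of 10,000 for B, the p-value is about 0.012, below the conventional 0.05 threshold. This assumes the result is evaluated once, at the planned end of the test.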

Tips for designing effective A/B Tests

- Quality assurance is a success factor in A/B testing. QA before launch helps ensure that your website functions as expected and remains error-free until the tests are over.

- Clearly define the goals of the A/B tests. What are you trying to achieve or improve on your website with the test? This helps you focus on only things that are relevant to your goals.

- Choose the right metrics to measure the success of the test. What are the KPIs that you want to track?

- No matter what you decide to change on your web pages, ensure that it doesn’t negatively affect the user experience.

- Choose a testing tool with the features required to run your tests effectively. Some features to look out for in A/B testing tools include customer support, multivariate testing, split-URL testing, advanced targeting, revenue impact reports, etc.

Conclusion and next steps

Let’s recap some of the critical points in this article:

A/B testing is an excellent process for determining which version of your website, product, or marketing efforts will help you achieve your business goals effectively.

To run an A/B test effectively, you should choose an A/B testing tool that gives you control and freedom over how you set up your tests. It’s also important to:

- Define your testing goals

- Choose elements to test

- Create multiple site variations

- Monitor the tests

- Gather and analyze test results

How to continue learning and improving with A/B Testing

After conducting an A/B test, further tests and analysis may be necessary to understand the reasons for the observed differences and determine whether the changes should be implemented permanently.

Combining A/B tests with other optimization techniques, such as user feedback and usability testing, can also improve the quality of the results and help businesses better understand how to improve their products and services.